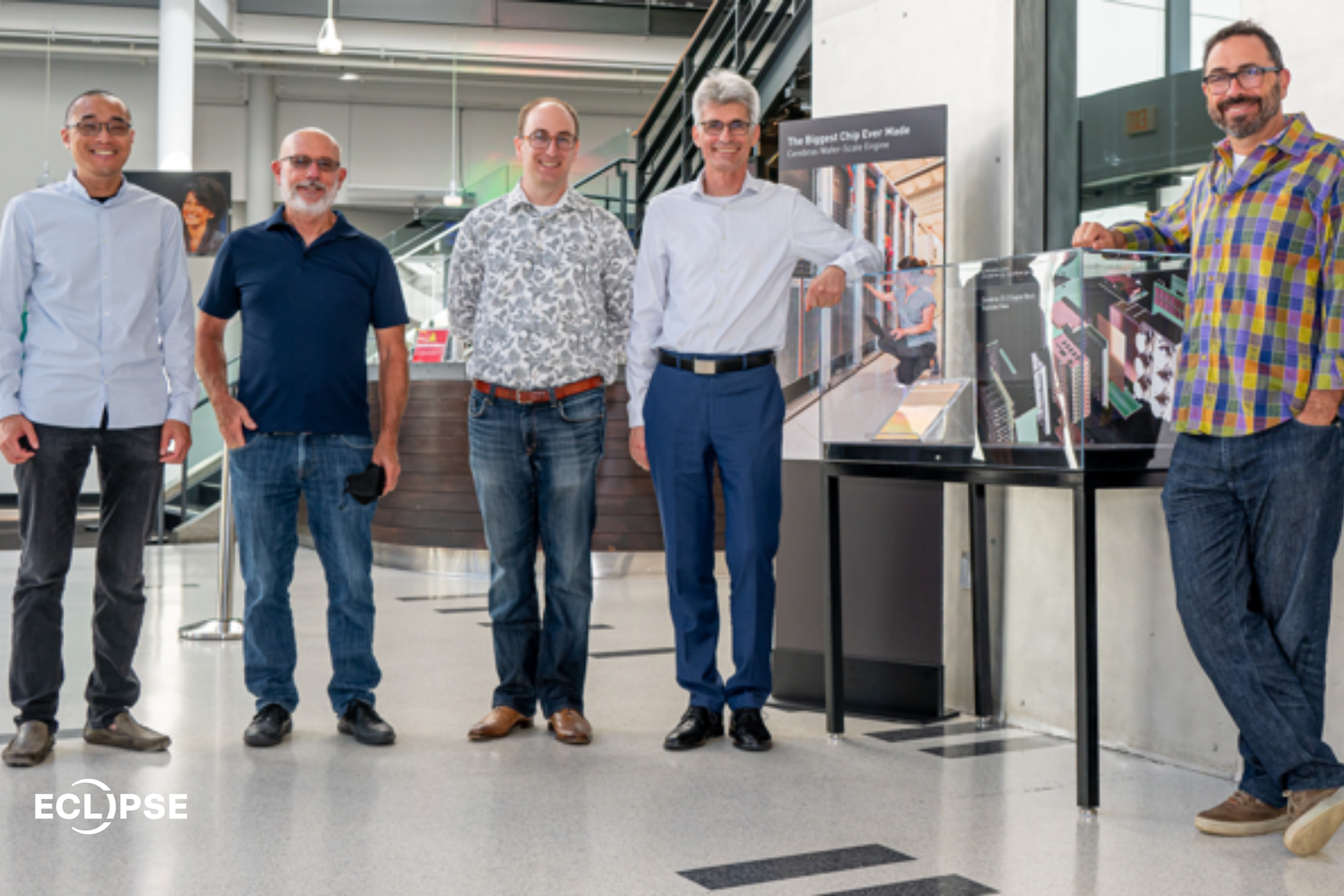

Today, Cerebras became a public company. This moment represents far more than a company milestone.

This IPO is a defiant confirmation that a once-fringe idea is now a widely held conviction: Cerebras has broken the core bottleneck to the AI revolution and become the defining force in the next great wave of economic and societal progress.

For years, the story of AI has been about its limitless potential: accelerating medical discoveries, reinventing industries, and amplifying human capability.

The vision was never the problem; the mechanics of executing it were.

As models and ambitions grew, iteration cycles lagged, costs exploded, and entire clusters were needed to train a single model. We’d built the most powerful catalyst for advancement in human history but were trying to harness it with infrastructure never designed for it.

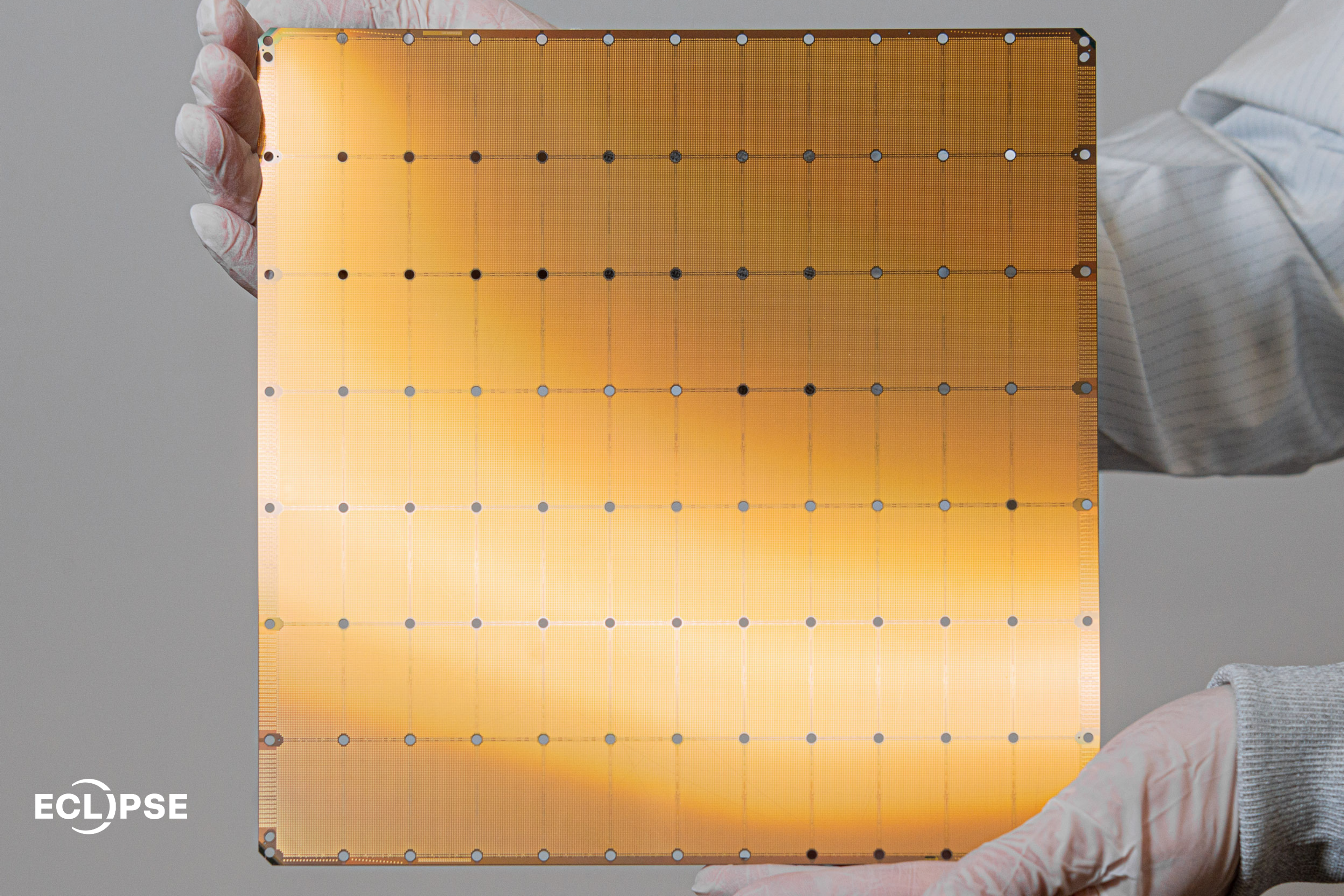

Andrew Feldman, Sean Lie, and the early Cerebras team set out to break this barrier with wafer-scale integration, an engineering challenge the industry had spent 75 years saying could not be done. It was 2016, before the true AI boom, before the rise of LLMs, before GPU shortages made the news. But AI compute demand was already doubling every 3.5 months while Dennard scaling was running out.